ROSMASTER R2L is a robot with an Ackermann steering structure, designed for automatic driving. It supports Jetson NANO/Xavier NX/TX2 NX boards and is developed on ROS and Ubuntu. It uses a 2MP high-definition camera combined with recognition algorithms to implement model training, autopilot, object recognition, tag tracking and other functions. Like other Yahboom robot cars, R2L supports app and gamepad remote control. We provide 80 courses with Chinese and English subtitles to help users get started with model training for automatic driving and with the ROS operating system.

(Max speed: 1.8 m/s)

Packing List

Ackermann steering chassis *1

520 gear motor 550RPM *2

Light bar fixing plate *1

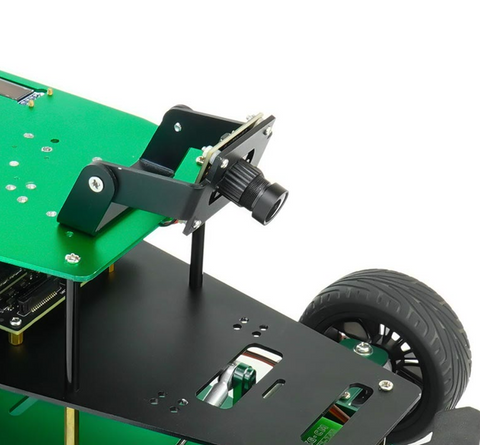

Camera fixing plate *1

HD camera *1

Racing rubber wheels *4

White glue light guide *1

Screwdriver *1

Parts package *3

ROScar expansion board *1

6000mAh 12.6V power battery and battery charger *1

Game controller and controller phone holder *1

Network card antenna

Several cables

Data cable *1

Several connection lines *N

Autopilot track (3.2m*2.8m) *1 (Just for Advanced Kit)

Traffic light model *1 (Just for Advanced Kit)

Iron signboard *5 (Just for Advanced Kit)

Traffic sign sticker *10 (Just for Advanced Kit)

High quality aviation aluminum box *1 (Just for Advanced Kit)

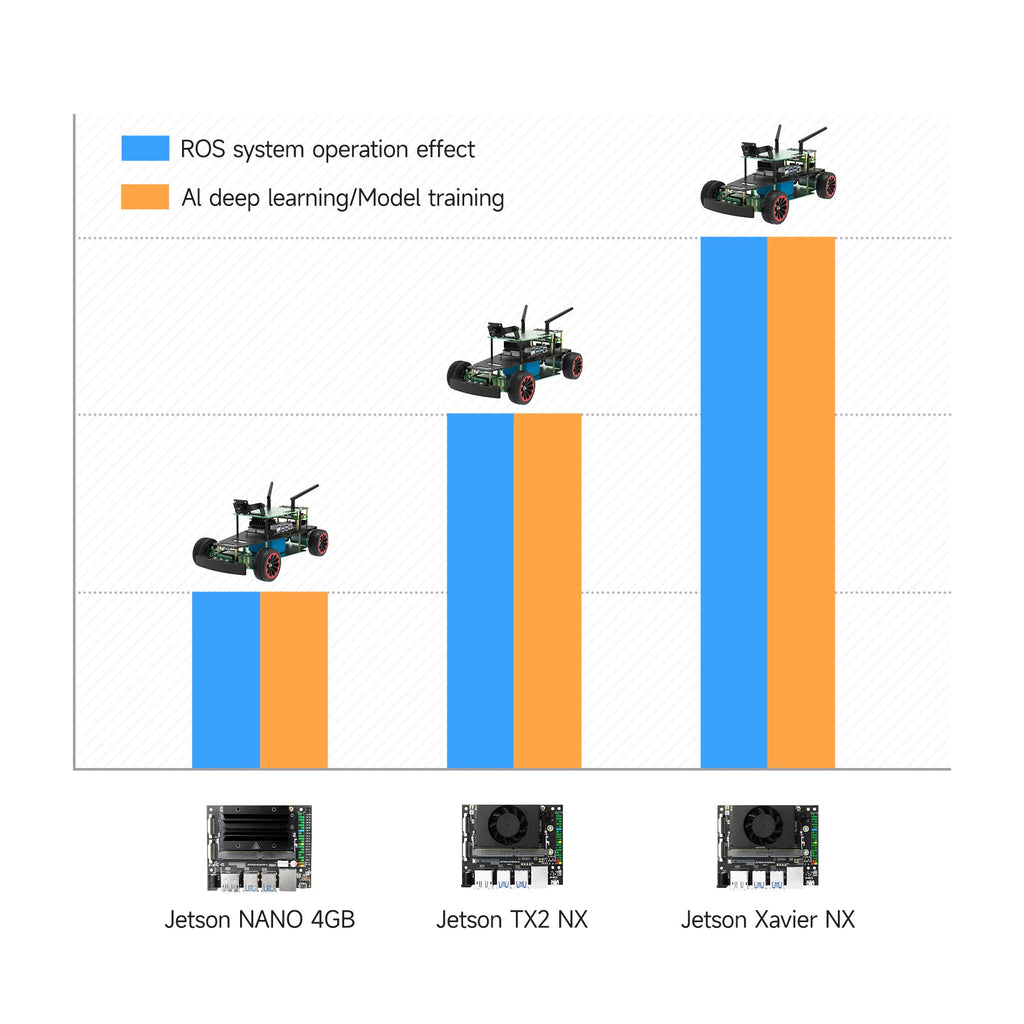

Three versions are available:

Jetson Nano version:

Jetson Nano 4GB SUB board (optional)

64GB system USB flash drive (with robot system) *1

Jetson Nano accessory package *1

TX2-NX version:

TX2-NX board (optional)

128GB SSD (with robot system) *1

TX2-NX accessories package *1

Xavier NX version:

Xavier NX 8GB SUB board (optional)

128GB SSD (with robot system) *1

Xavier NX accessory package *1

【Top Hardware Configuration】

★ Compatible with Jetson NANO 4GB/Xavier NX/TX2 NX

The main controller of ROSMASTER R2L is an NVIDIA Jetson series board, and all supported boards run Ubuntu. Users can choose the main control board that fits their needs.

★ Ackermann Chassis

Ackermann steering is the steering geometry used in modern cars. ROSMASTER R2L uses an aluminum alloy Ackermann chassis. When R2L turns, the inner and outer front wheels steer at different angles: the inner wheel turns more sharply, so its turning radius is smaller than that of the outer wheel, and both wheels roll around a common turning center.
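The different wheel angles follow from the standard Ackermann geometry. With wheelbase L, front track width W and turning radius R measured to the midpoint of the rear axle, the ideal angles are: inner angle = atan(L / (R - W/2)) and outer angle = atan(L / (R + W/2)). The short Python sketch below evaluates these textbook formulas; it is not taken from the R2L firmware, and the dimensions in the usage line are hypothetical placeholders rather than measured R2L values.

```python
import math


def ackermann_angles(wheelbase: float, track: float, radius: float) -> tuple[float, float]:
    """Return (inner, outer) front-wheel steering angles in degrees.

    wheelbase: distance between front and rear axles (m)
    track: distance between the two front wheels (m)
    radius: distance from the turning center to the midpoint of the rear axle (m)
    """
    if wheelbase <= 0 or track <= 0:
        raise ValueError("wheelbase and track must be positive")
    if radius <= track / 2:
        raise ValueError("radius must be larger than half the track width")
    # The inner wheel is closer to the turning center, so it needs a larger angle.
    inner = math.degrees(math.atan(wheelbase / (radius - track / 2)))
    outer = math.degrees(math.atan(wheelbase / (radius + track / 2)))
    return inner, outer


if __name__ == "__main__":
    # Hypothetical dimensions for illustration only, not measured R2L values.
    inner, outer = ackermann_angles(wheelbase=0.23, track=0.19, radius=0.8)
    print(f"inner: {inner:.1f} deg, outer: {outer:.1f} deg")
```

For any valid radius the inner angle comes out larger than the outer one, which is exactly the behavior described above.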

★3.2m*2.8m Automatic Driving Track Map

Because ROSMASTER R2L uses Ackermann steering, it is well suited to automatic driving scenarios. We designed a line-following map for R2L and include accessories such as traffic signs and guide signs.

Users can build automatic driving scenarios, train models and deploy Darknet YOLO to recognize and detect traffic signs.

The map is made of high-quality UV-printed canvas with bright colors; it resists corrosion, mildew and stains, and recovers from creases.

★ HD camera

We replaced the depth camera with a small, cost-effective HD camera. It still supports automatic driving and AI visual recognition while lowering the cost so that more users can afford it.

!!! Note: Supports user-defined color selection; the robot automatically identifies the selected color area and follows it as a line.
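One common way to follow a colored line is to threshold the camera image in HSV space (for example with OpenCV's `cv2.inRange`), find the centroid of the matching pixels, and steer in proportion to its horizontal offset from the image center. The sketch below assumes the binary mask has already been produced and is given as a list of rows of 0/1 values; it illustrates this general technique and is not R2L's own line-following code. The gain `kp` is a hypothetical value that would need tuning.

```python
from typing import List, Optional


def line_offset(mask: List[List[int]]) -> Optional[float]:
    """Return the centroid offset of the matched pixels, normalized to [-1, 1].

    -1 means the line is at the left edge, 0 at the center, 1 at the right edge.
    Returns None when no pixel matched (line lost).
    """
    if not mask or len(mask[0]) < 2:
        raise ValueError("mask must have at least one row and two columns")
    count = 0
    x_sum = 0
    for row in mask:
        for x, value in enumerate(row):
            if value:
                count += 1
                x_sum += x
    if count == 0:
        return None
    center = (len(mask[0]) - 1) / 2
    return (x_sum / count - center) / center


def steering_command(offset: float, kp: float = 0.8, limit: float = 1.0) -> float:
    """Proportional steering: scale the offset by kp and clamp to [-limit, limit]."""
    return max(-limit, min(limit, kp * offset))
```

In practice the mask is often cropped to the lower part of the image, closest to the car, before the centroid is computed, so that distant parts of the track do not pull the steering early.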

【Multiple Remote Control Methods】

It supports app control on both iOS and Android, and also provides a mapping and navigation app to cover multiple control scenarios.

The kit includes a game controller; combined with the mobile phone app, it provides an FPV (first-person view) driving experience.

◆◆◆Automatic driving workflow

Step 1: Train the model

Train and run a YOLOv5 recognition model: R2L comes with a YOLOv5 environment installed for both training and recognition. Import your training images, train the model, and then load the trained model for recognition.
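YOLOv5 expects one text label file per training image, with one row per object in the form `class x_center y_center width height`, all values normalized to the 0 to 1 range. If your annotations are pixel bounding boxes, a small helper like the sketch below converts them; the class numbering in the example is hypothetical, not R2L's label set. In the upstream YOLOv5 repository, training is then launched with `train.py`, pointing `--data` at a dataset YAML file.

```python
def to_yolo_label(class_id: int, x_min: float, y_min: float, x_max: float, y_max: float,
                  img_w: int, img_h: int) -> str:
    """Convert a pixel bounding box to one YOLO label line.

    Output: "class x_center y_center width height", each value normalized to [0, 1].
    """
    if not (0 <= x_min < x_max <= img_w and 0 <= y_min < y_max <= img_h):
        raise ValueError("box must lie inside the image and have positive size")
    x_center = (x_min + x_max) / 2 / img_w
    y_center = (y_min + y_max) / 2 / img_h
    width = (x_max - x_min) / img_w
    height = (y_max - y_min) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


if __name__ == "__main__":
    # Hypothetical example: class 0 (e.g. "traffic light") in a 640x480 image.
    print(to_yolo_label(0, 100, 50, 300, 250, 640, 480))
```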

R2L also has the JetRacer framework installed. By training on road images and exporting the model, you can also achieve automatic driving on the track map.
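In JetRacer's road-following example, each training image is labeled with the point the car should steer toward, the model learns to predict that point, and the predicted x value is turned into a steering command with a gain and a bias. The sketch below illustrates those two conversions under that assumption; the function names and the default gain are hypothetical and are not taken from R2L's or JetRacer's code.

```python
def normalize_click(px: float, width: float) -> float:
    """Map a clicked pixel column in [0, width] to [-1, 1] (0 = image center)."""
    return 2.0 * (px / width - 0.5)


def steering_from_prediction(x: float, gain: float = 0.75, bias: float = 0.0) -> float:
    """Turn a predicted x in [-1, 1] into a steering value clamped to [-1, 1].

    gain scales how hard the car steers; bias corrects a mechanical offset so
    that a prediction of 0 drives straight.
    """
    steering = x * gain + bias
    return max(-1.0, min(1.0, steering))
```

Keeping gain and bias as separate parameters lets you correct a servo that is slightly off-center without retraining the model.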

Step 2: Run automatic driving

- Go straight

- Turning

- Traffic light identification

- Parking
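How these behaviors are combined depends on the course code. One minimal way to picture it is a priority rule that checks detections before following the line. In the sketch below, the detection labels (`red_light`, `parking`), the stop-when-line-lost rule and the turn threshold are all hypothetical choices made for illustration.

```python
from enum import Enum
from typing import Iterable, Optional


class Action(Enum):
    STRAIGHT = "go straight"
    TURN = "turn"
    STOP = "stop"
    PARK = "park"


def choose_action(labels: Iterable[str], offset: Optional[float],
                  turn_threshold: float = 0.3) -> Action:
    """Pick one behavior per frame, checking the highest-priority cases first.

    labels: names of objects detected in this frame (hypothetical label set)
    offset: normalized line offset in [-1, 1], or None if the line is lost
    """
    seen = set(labels)
    if "red_light" in seen:
        return Action.STOP          # wait at a red light
    if "parking" in seen:
        return Action.PARK          # parking sign detected
    if offset is None:
        return Action.STOP          # line lost: stop rather than guess
    if abs(offset) > turn_threshold:
        return Action.TURN          # line far from center: steer into the curve
    return Action.STRAIGHT


if __name__ == "__main__":
    print(choose_action(["green_light"], 0.05).value)  # go straight
```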

Summary

Compared with the existing R2 car, R2L is a simplified version: it has only a small HD camera and omits the lidar, depth camera and 7-inch screen. It is mainly intended for automatic driving scenarios rather than AI vision, lidar mapping and navigation, or robot arm simulation.